The argument on this subject goes both ways. On one hand, we have the machine learning and artificial intelligence technologists who would argue against human intervention. On the other hand, there are professionals who argue that when machines handle event analysis alone, too many false positives come through (which is really a filtering problem), or too many false negatives consistently go undetected. The operative word in that last sentence is "consistently." Consistency is where a machine outperforms its human counterpart, but the range of events to be analyzed is vast. You could eventually map out a massive logic flow, but the harder challenge is the ongoing updating and management of such a workflow so that it keeps pace with changing technology, processes, people, and attack surfaces.

Without getting too scientific, or diving into how humans can analyze AI decision patterns to improve machine efficiency, let's walk through a real-life scenario:

An employee named Randy, who has been with the company for 15 years and is an IP network and telephony expert, exports all of his contacts (mostly personal and professional friends) from Outlook into a CSV file and sends it to his personal email address on the last workday of the year, just before the New Year's vacation. In fact, Randy has done this many times before as an offsite backup of his contacts. No leakage is intended. Company policy requires that any private information, or any information classified as confidential or above, be at least zip-encrypted before it is sent through the email system.

Under this policy, Randy did something non-compliant, but is it an incident? Does it need to be reported as an incident and receive special attention? That is open for debate, but even if it is an incident, this kind of event is better handled with human review and confirmation. Even then, the incident handling would most likely be open and shut.
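To make the policy concrete, here is a minimal sketch of how a DLP rule for Randy's case might be expressed so that a violation is routed to human review instead of being auto-declared an incident. The classification labels, the `Attachment`/`OutboundEmail` types, the `evaluate` function, and the email addresses are all hypothetical and do not represent any particular DLP product's API; it also assumes the DLP classifier has already labeled each attachment.

```python
from __future__ import annotations
from dataclasses import dataclass, field

# Hypothetical labels that the policy says must be at least zip-encrypted:
# "private", plus "confidential" and anything classified above it.
REQUIRES_ENCRYPTION = {"private", "confidential", "restricted"}


@dataclass
class Attachment:
    filename: str
    classification: str  # label assumed to come from the DLP classifier
    zip_encrypted: bool


@dataclass
class OutboundEmail:
    sender: str
    recipient: str
    attachments: list[Attachment] = field(default_factory=list)


def evaluate(email: OutboundEmail) -> tuple[str, str]:
    """Return (verdict, reason). A violation is queued for human review,
    never auto-declared an incident."""
    for att in email.attachments:
        if att.classification in REQUIRES_ENCRYPTION and not att.zip_encrypted:
            return "human_review", f"{att.filename} is {att.classification} and not encrypted"
    return "allow", "no policy violation"


randy = OutboundEmail(
    sender="randy@corp.example",
    recipient="randy@personal.example",
    attachments=[Attachment("contacts.csv", "private", zip_encrypted=False)],
)
print(evaluate(randy))  # ('human_review', 'contacts.csv is private and not encrypted')
```

The point of returning `human_review` rather than `incident` is that the rule only detects non-compliance; deciding whether it is an incident stays with a person.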

Workflows to the Rescue

Many other scenarios, similar and otherwise, come up in DLP and event reporting systems in general, and for these a combination of enforced workflow and human confirmation is ultimately the best solution.

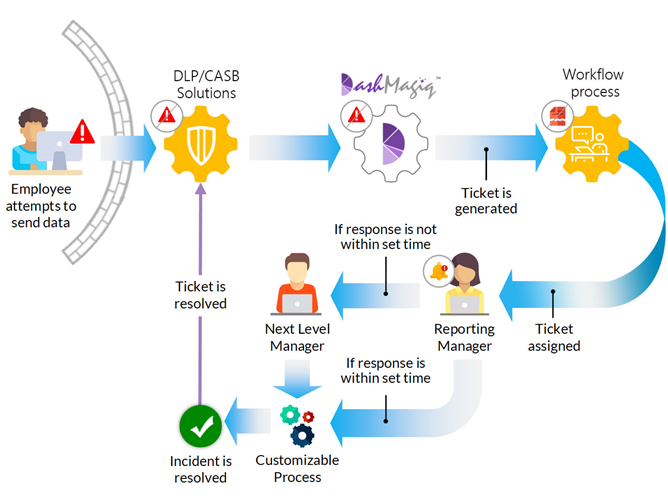

In the scenario above, after the DLP/CASB detects a potentially non-compliant email leaving the network perimeter, an alert is issued, the email is quarantined, and a security team member is informed, all in one step. Without automation, the security team has to contact the reporting manager, get confirmation, and then decide whether the response time is compliant. If it is not, they must contact the next-level manager and obtain review and approval. Such a process could take a day or even a couple of days. With the DashMagiq orchestration and automation solutions, the workflow can be customized for your specific company or industry requirements, and the security reviewer and the reporting manager can be sent an alert email simultaneously, allowing both interested parties to review the event at the same time and respond much faster. This happens in minutes and reduces the time security staff spend on such events, unless they turn into real issues or are escalated to an incident.
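The review loop described above (quarantine, notify both reviewers at once, escalate to the next-level manager if the response window lapses) can be sketched as a small state machine. This is an illustrative sketch only, not DashMagiq's implementation: the four-hour window, the role names, and the functions `open_task`, `decide`, and `check_deadline` are hypothetical, and `notify` stands in for whatever email or chat sender your environment provides.

```python
from __future__ import annotations
from dataclasses import dataclass, field
from datetime import datetime, timedelta
from typing import Callable

Notify = Callable[[str, str], None]  # (recipient, message), e.g. an email sender
SLA = timedelta(hours=4)             # hypothetical manager response window


@dataclass
class ReviewTask:
    event_id: str
    assignees: dict[str, str]  # role -> person
    deadline: datetime
    decisions: dict[str, str] = field(default_factory=dict)  # role -> "approve" | "reject"
    status: str = "quarantined"


def open_task(event_id: str, security: str, manager: str,
              now: datetime, notify: Notify) -> ReviewTask:
    task = ReviewTask(event_id, {"security": security, "manager": manager}, now + SLA)
    for person in task.assignees.values():
        # Both parties are alerted at once instead of one after the other.
        notify(person, f"DLP event {event_id} quarantined; please review")
    return task


def decide(task: ReviewTask, role: str, decision: str) -> None:
    task.decisions[role] = decision
    if len(task.decisions) == len(task.assignees):
        # Release the email only if every role approved; otherwise open an incident.
        approved = all(d == "approve" for d in task.decisions.values())
        task.status = "released" if approved else "incident"


def check_deadline(task: ReviewTask, now: datetime,
                   next_manager: str, notify: Notify) -> bool:
    """Escalate to the next-level manager if the manager missed the deadline."""
    if task.status == "quarantined" and "manager" not in task.decisions and now > task.deadline:
        task.assignees["manager"] = next_manager
        task.deadline = now + SLA
        notify(next_manager, f"Escalated: DLP event {task.event_id} awaits your review")
        return True
    return False


sent: list[str] = []
t0 = datetime(2024, 12, 31, 16, 0)
task = open_task("EVT-1", "sec-analyst", "randy-manager", t0, lambda to, msg: sent.append(to))
decide(task, "security", "approve")
check_deadline(task, t0 + timedelta(hours=5), "director", lambda to, msg: sent.append(to))
decide(task, "manager", "approve")
print(task.status, sent)  # released ['sec-analyst', 'randy-manager', 'director']
```

In practice `check_deadline` would run on a scheduler; it is called explicitly here so the escalation path is easy to follow.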

While the process above clearly still requires human intervention, the tasks related to reviewing each DLP event are pushed to the right people and the workflow is enforced. This eliminates the middleman between DLP system notices and the reviewing managers; in larger environments, the volume of events could otherwise require a dedicated team.

Other Applications of Workflow in Cybersecurity

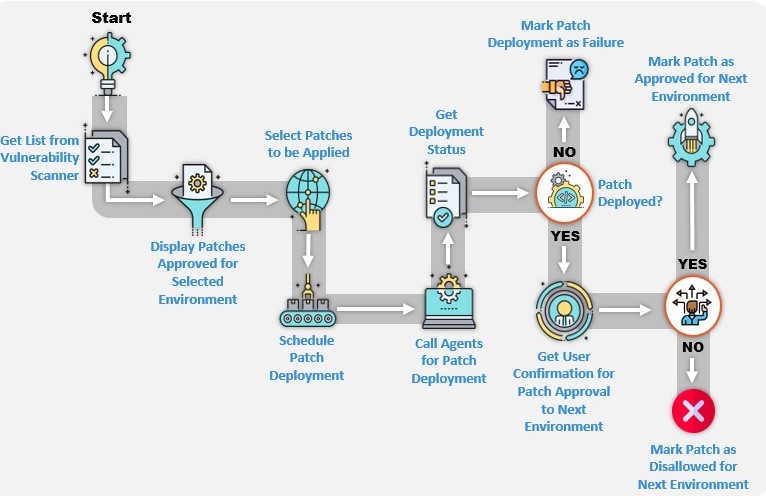

The diagram above shows a typical patch management workflow, with automated integration into a dashboard that displays outstanding patches drawn from integrated vulnerability scanner data. This is how patches should be managed; the days of tracking patches in complex, shared Excel spreadsheets are long gone. The advantages of automating workflow within cybersecurity processes, which are clearly becoming a frontline mission, are plain: automated notifications for approvals and requests, rule-based workflow, and shared dashboard views. The DashMagiq orchestration and automation solution provides this kind of automation by simply scripting the rules and policies. In the end, however, we are tracking unpatched systems, and to execute effectively we need a single, regularly updated report.
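As a rough illustration of the "single, regularly updated report," the sketch below rolls vulnerability scanner findings up into a per-host summary of outstanding and overdue patches. The `Finding` record shape, the severity-based remediation windows, and the patch IDs are hypothetical assumptions, not the output format of any specific scanner or of DashMagiq.

```python
from __future__ import annotations
from collections import defaultdict
from dataclasses import dataclass
from datetime import date, timedelta

# Hypothetical remediation windows (days) per severity; set these from your own policy.
REMEDIATION_DAYS = {"critical": 7, "high": 30, "medium": 90, "low": 180}


@dataclass(frozen=True)
class Finding:
    host: str
    patch_id: str
    severity: str
    first_seen: date  # when the scanner first reported the missing patch


def outstanding_report(findings: list[Finding],
                       applied: set[tuple[str, str]],
                       today: date) -> dict[str, dict]:
    """Summarize unpatched findings per host and flag those past their window.

    applied: (host, patch_id) pairs already confirmed as installed.
    """
    report: dict[str, dict] = defaultdict(lambda: {"outstanding": 0, "overdue": []})
    for f in findings:
        if (f.host, f.patch_id) in applied:
            continue  # patched hosts drop off the report entirely
        row = report[f.host]
        row["outstanding"] += 1
        if today - f.first_seen > timedelta(days=REMEDIATION_DAYS[f.severity]):
            row["overdue"].append(f.patch_id)
    return dict(report)


findings = [
    Finding("web01", "PATCH-001", "critical", date(2024, 1, 1)),
    Finding("web01", "PATCH-002", "low", date(2024, 1, 5)),
    Finding("db01", "PATCH-003", "high", date(2023, 11, 1)),
]
applied = {("db01", "PATCH-003")}
print(outstanding_report(findings, applied, today=date(2024, 1, 10)))
# {'web01': {'outstanding': 2, 'overdue': ['PATCH-001']}}
```

Feeding this from each scheduled scan, rather than from a hand-edited spreadsheet, is what keeps the report current.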

Evidence Recording

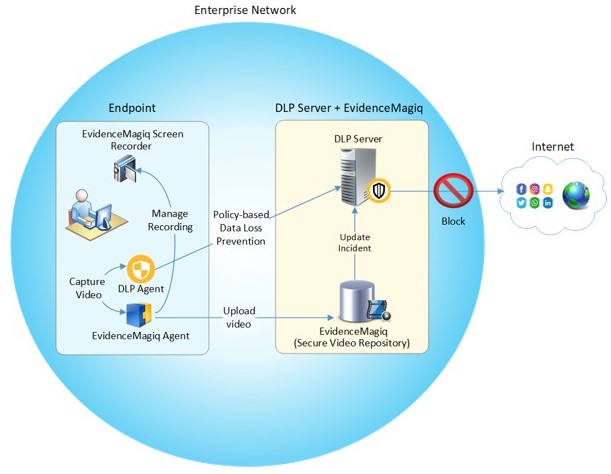

Many people have mixed feelings about event recording applications in cybersecurity monitoring, but one thing holds true: it needs to be done in secure, higher-risk environments. In my humble opinion, there should be no expectation of privacy when you are using company tools. Event recording helps the incident management process when higher-risk events are identified, such as exfiltration in progress from a particular employee's terminal, or multiple files sent via outbound email. This evidence supports incident handling, so that most of the analysis can be limited to checking login times and reviewing the event recordings.

The challenge in this implementation is securing data in transit and data at rest: both must be encrypted and protected with access control and non-repudiation, and access to the screen recording mechanism itself must be controlled. As the layout above shows, DashMagiq Evidence Recorder meets these data handling and storage requirements, and DashMagiq also offers a DLP agent that starts screen recording when a specific policy-based leakage event occurs.
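The trigger-and-protect pattern described above can be sketched as follows: a DLP event for selected policies starts recording on a terminal, stored clips get an HMAC tag so tampering is detectable, and retrieval is limited to authorized viewers. Everything here is hypothetical (the policy IDs, the `EvidenceRecorder` class, the in-memory store) and is not DashMagiq's design. Note that an HMAC only gives tamper evidence; encryption in transit and at rest should come from TLS and an authenticated cipher in a vetted library, and true non-repudiation requires digital signatures, all of which are out of scope for this sketch.

```python
from __future__ import annotations
import hashlib
import hmac
from dataclasses import dataclass, field

# Hypothetical DLP policy IDs that should start screen recording.
RECORDING_POLICIES = {"bulk-outbound-email", "mass-file-copy"}


def _tag(key: bytes, data: bytes) -> str:
    """HMAC-SHA256 tag used to detect tampering with stored evidence."""
    return hmac.new(key, data, hashlib.sha256).hexdigest()


@dataclass
class EvidenceRecorder:
    key: bytes         # in practice, held in a secrets manager, not in code
    viewers: set[str]  # users allowed to review evidence
    recording: set[str] = field(default_factory=set)  # terminals being recorded
    evidence: dict[str, tuple[bytes, str]] = field(default_factory=dict)

    def on_dlp_event(self, terminal: str, policy_id: str) -> bool:
        """Start recording only for policies flagged as high risk."""
        if policy_id in RECORDING_POLICIES:
            self.recording.add(terminal)
            return True
        return False

    def store(self, terminal: str, clip: bytes) -> None:
        if terminal not in self.recording:
            raise RuntimeError(f"no recording was triggered for {terminal}")
        self.evidence[terminal] = (clip, _tag(self.key, clip))

    def retrieve(self, user: str, terminal: str) -> bytes:
        if user not in self.viewers:
            raise PermissionError(f"{user} may not view evidence")
        clip, tag = self.evidence[terminal]
        # Constant-time comparison of the stored tag with a freshly computed one.
        if not hmac.compare_digest(tag, _tag(self.key, clip)):
            raise ValueError("evidence failed its integrity check")
        return clip


recorder = EvidenceRecorder(key=b"demo-key", viewers={"ir-lead"})
recorder.on_dlp_event("T-42", "bulk-outbound-email")  # starts recording on T-42
recorder.store("T-42", b"screen frames")
print(recorder.retrieve("ir-lead", "T-42"))  # b'screen frames'
```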